Sunday marked the ten-year anniversary of the Virginia Tech massacre, in which 32 students and faculty members were murdered.

The killer that day paused between shootings to mail NBC News a manifesto in which he attempted to rationalize the attack. It arrived two days later.

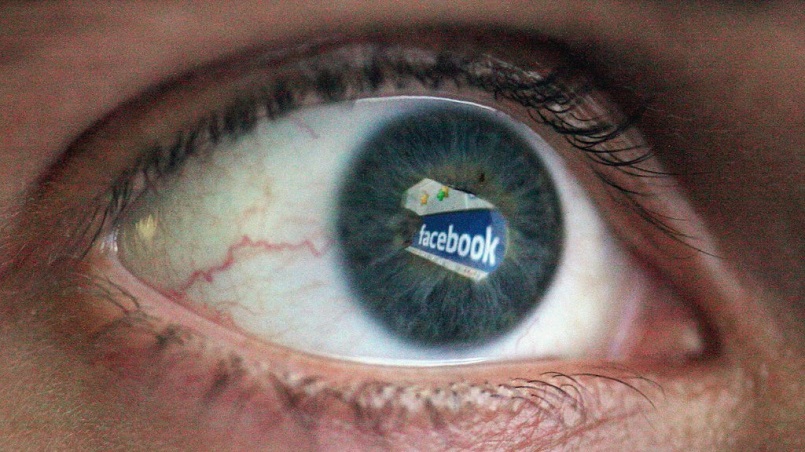

Ten years later, murderers can just get on Facebook Live and attempt to rationalize their actions to their Facebook friends in real time.

That's what happened on Sunday afternoon. The shooting of an elderly man in Cleveland -- by a suspect who held his phone in one hand and a gun in the other -- caused a manhunt that was still underway a day later.

The suspect recorded the shooting and uploaded it to Facebook. Then he logged onto Facebook Live, the social network's relatively new live-streaming tool, and described the attack.

The suspect also posted status updates and tagged various friends in them. He claimed that he had killed 15 other people, but police have not found evidence of any additional victims. His Facebook page was eventually removed, but not until "several hours" after the attack, according to The Verge.

The shooting has renewed a set of questions about Facebook's multiple roles and responsibilities in both the virtual world and the real world.

Among them: Should the company be doing more to identify and remove violent videos more quickly? Do live-streaming capabilities cause more harm than good?

Other Silicon Valley giants, like Google's YouTube, have also come under scrutiny as users have publicized and shared crimes on social networks.

But Facebook's sheer ubiquity has put the company front and center.

"Since its launch, Live has provided an unedited look at police shootings, rape, torture, and enough suicides that Facebook will be integrating real-time suicide prevention tools into the platform," Wired's Emily Dreyfuss wrote Sunday. "And though murders have been captured by witnesses on Facebook Live—and people have even been killed as they were streaming to the service—this appears to be the first time a killer has streamed themselves preparing to commit a homicide, and then uploading the act itself, as happened" in Cleveland.

Copies of the shooting video have circulated on Facebook, Twitter and other sites. The tech companies have algorithms and teams of human editors who are constantly removing graphic and disturbing material.

On Sunday afternoon, Facebook said in a statement, "This is a horrific crime and we do not allow this kind of content on Facebook. We work hard to keep a safe environment on Facebook, and are in touch with law enforcement in emergencies when there are direct threats to physical safety."

The company said it had no further updates on Monday.